No one trusts an AI without proof of training data

Auditability requires evidence

No transparency, no trus

A transparent AI lifecycl

on:mint delivers it.

AI Audit Trails

Case Study: From training to approval

01

Anchor training data in a tamper-proof way

02

Audit the training process end to end

03

Secure the model immutably

04

Log usage continuously

• Use Cases

How on:mint protects your sensitive data streams

Autonomous Driving: End-to-End Documentation

Medical AI: Transparent Documentation

Credit Scoring: Making Decisions Verifiable

Reproducible Experiments in AI Research

Safeguarding Administrative Decisions Made by AI

• Before / After

Transparency instead of a black box

• CREATE EVIDENCE

Put an end to the AI black box

FAQs

What are AI Audit Trails and which problem do they solve?

AI Audit Trails provide cryptographically verifiable traceability across the entire AI value chain, from training data and training runs to specific model outputs.

They make the following questions tamper-proof and auditable:

- Which data was a model trained on?

- Which code and model version was used?

- Under which parameters was the model trained or used for inference?

- Which model produced which result, and when?

All relevant steps are logged automatically, cryptographically secured, and made immutably verifiable via blockchain and gated IPFS.

This establishes transparency and accountability in AI systems.

How does on:mint prove which training data was used?

Before training begins, the training dataset is cryptographically sealed once.

Technically, this is implemented using a Merkle tree:

- The dataset is split into individual blocks.

- Each block receives a hash, a cryptographic fingerprint.

- These hashes are combined into a Merkle tree whose root uniquely represents the full dataset state.

The Merkle tree reference, together with metadata such as source, license, and timestamp, is stored in on:mint’s gated IPFS system and additionally anchored on the blockchain.

This makes it provable that a specific dataset existed in exactly this form and was referenced for training.

No claim is made that every individual data point can later be recovered from the model. With modern AI systems, this is not technically feasible today.

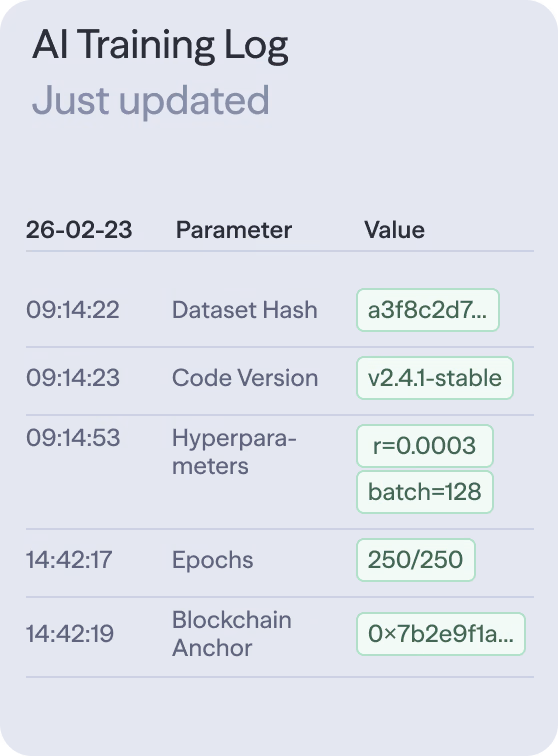

What is documented during training, and what is not?

During training, on:mint documents the conditions and configuration of the training run, referred to as a Run Proof, including:

- Reference to the training dataset (Merkle tree)

- Code version, such as a Git commit or container version

- Training parameters and configuration

- Initial random parameters (seeds)

- Training outcome

AI Audit Trails therefore enable verification of the training conditions, not exact reproduction of the same model. The objective is traceability and auditability, not mathematical reproducibility.

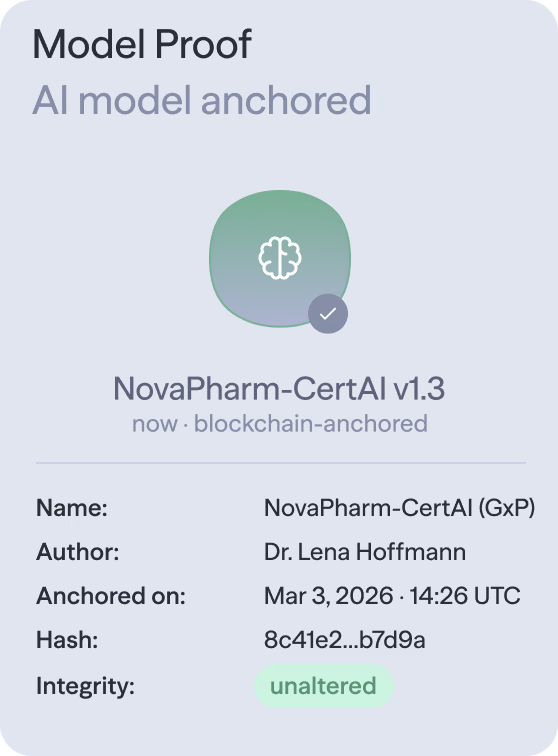

How is it ensured that a model is uniquely identifiable?

After training, each model version receives a unique cryptographic identity, known as a Model Proof:

- The model weights are hashed.

- This hash is stored in gated IPFS and anchored on the blockchain.

- Any change to the model, no matter how small, results in a different hash.

As a result, it is always verifiable which exact model version was used. Undetected manipulation or silent changes are technically impossible.

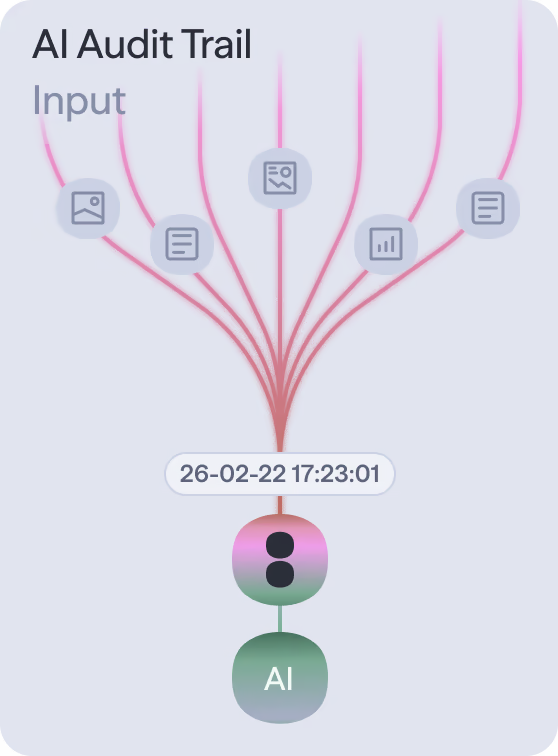

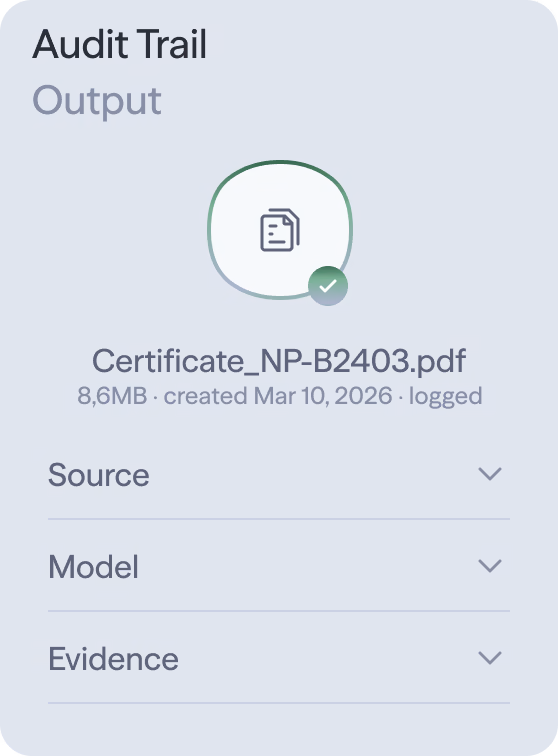

How are individual AI outputs made traceable?

During model usage, integrated AI logging automatically documents each relevant inference as an Inference Proof, including:

- Hash of the input, such as a prompt, image, or sensor data

- Hash of the output

- Model ID and model version

- Parameters, such as temperature or sampling seed

- Timestamp and usage context

These logs are periodically bundled into audit packages, digitally signed, stored in IPFS, and anchored on the blockchain via smart contracts.

As a result, it becomes provable that a specific output was generated by a specific model, from a specific input, under specific parameters.